That we have reached a stage in additive manufacturing (AM) where machine learning and artificial intelligence (AI) are recognized as critical functionalities reflects how far our understanding of additive has evolved in the recent past. As other stories on this site have described, the variables for achieving the desired outcomes in additive manufacturing are potentially too numerous and complex to master through trial and error alone. However, as became clear to me during a recent visit to GE’s Global Research Center in Niskayuna, New York, we are reaching a nexus where computational power and AI frameworks are robust enough to meet and overcome this complexity. And with the recent announcement that GE is outfitting its Concept Laser M2 additive machines with an industrial internet platform that will enable machine learning at the machine, one of the world’s foremost leaders in AM is betting long on this technology.

GE’s Global Research Center (GRC) in Niskayuna is part of a network of technology centers GE operates around the world. Founded in 1900 as the first industrial research lab in the United States, it is, by far, the oldest. The legacy of Thomas Edison looms large here, so large that the Global Research Center was modeled on Edison’s famous laboratory complex in Menlo Park, New Jersey. Today, the GRC houses roughly 2,000 employees on a campus teeming with scientists and engineers whose specialties range from materials and metallurgy, to laser physics, to additive manufacturing, to digital applications and computer programming, to any technology that informs the industries in which GE competes. During my visit, a number of GE scientists at the campus told me that this breadth of expertise is the core asset of the GRC: they can pick up a phone and form “instant teams” with some of the world’s foremost experts across science and engineering disciplines.

This context is important not as an illustration of GE’s size and scope, but for understanding where machine learning fits into the company’s business strategy, and how GE is laying the groundwork for it today. It all begins with what GE calls Predix.

In short, Predix is GE’s operating system for the industrial internet. It has been open and available to outside companies since 2015, and is the core digital platform on which data from industrial assets—from oil pumps to locomotives to wind turbines and now to 3D printers—resides. It is also where data processing and analysis take place, either in the cloud or on a computer control system located on or near the asset itself. At GE, the latter configuration is referred to as the Predix Edge. (The computations and analysis take place locally, at the “edge” of the network.) To understand how these platforms and capabilities are used at GE today, and how they are beginning to operate on Concept Laser machines, let’s illustrate this digital ecosystem using a long-tested GE asset as an example: a gas turbine engine for a power plant.

The main characteristic that drives the profitability of a gas turbine is reliability. At a typical electric power plant, service outages for a single gas turbine are scheduled when the weather is neither too hot nor too cold, a condition that minimizes the amount of electricity that will be lost during the outage. In other words, the service outage is a fixed event, and the operator wants to use up all of the life in the turbine by the time of that scheduled outage. The operator also wants the turbine to run as efficiently as it can until that event. The hotter the turbine runs, the more overall efficiency the operator gains from it. Of course, the hotter it runs, the faster its life degrades.

But if you have sophisticated controls acting as the brain of the turbine, you can ask those controls to perform three essential tasks that can inform decisions about when to schedule the outage, how hot to run the turbine and other variables that may affect your decision. In short, you can ask the controls to see, think and do. Sensors located on the turbine collect information (see); the CPU processes the information and compares it to how the machine should be behaving (think); and the controls then send signals to the actuators on the machine that affect motor speed, torque and so on (do).
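The see, think, do cycle can be sketched in a few lines of Python. This is only an illustration of the pattern, not GE’s control code: the exhaust-temperature reading, the fuel-valve actuator, the proportional gain and every function name here are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One sensor sample; the field name is illustrative."""
    exhaust_temp_c: float


def see(raw_sample: float) -> Reading:
    # "See": wrap a raw sensor value in a typed reading.
    return Reading(exhaust_temp_c=raw_sample)


def think(reading: Reading, target_temp_c: float, gain: float = 0.01) -> float:
    # "Think": compare measured behavior with expected behavior and
    # return a proportional correction (negative means "back off").
    return gain * (target_temp_c - reading.exhaust_temp_c)


def do(fuel_valve: float, correction: float) -> float:
    # "Do": apply the correction to a hypothetical actuator setting,
    # clamped to the range 0.0 (closed) to 1.0 (fully open).
    return max(0.0, min(1.0, fuel_valve + correction))


def control_step(raw_sample: float, fuel_valve: float, target_temp_c: float) -> float:
    # One pass through the loop: see, then think, then do.
    return do(fuel_valve, think(see(raw_sample), target_temp_c))
```

With these assumed numbers, a turbine running 20 degrees above its target has its valve setting reduced, and one running below target has it raised. A real controller would weigh many more inputs, which is where the fleet data described next comes in.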

The data from the local controls can then be combined with data in the cloud that has been collected from other gas turbines around the world. Machine learning now can use all of that data to inform decisions, such as: should the turbine burn hotter or cooler? Should it burn a little bit below baseload, or should it be over-fired to squeeze out more electricity? What is the current price of electricity? What is the weather forecast? What are the other turbines doing? How much life does the turbine have left?

In this example, machine learning and the digital platform on which it resides are key components. But so is the industry domain knowledge—the engineers who understand the materials of the turbine blade, and who understand creep and spallation and degradation. It’s this same combination of processing power, machine functionality and industry domain expertise that GE says it’s bringing to the field of additive manufacturing. The key difference, of course, is that additive technologies are nascent, especially in comparison to gas turbines or locomotives. But when you have scientists on staff like Bill Carter, who co-wrote a research article in 1992 about how additive manufacturing will be standard practice, or Marshall Jones, who at 76 still works at the Global Research Center after a career developing and perfecting high-powered lasers, you may fairly be considered ahead of the curve.

Still, machine learning for additive is a new application within a new field, and the work being done at the GRC reflects that. And while you could say that the use of GE’s Predix Edge and Cloud systems won’t cost GE Additive anything since the technology is already in use for wind and gas turbines, MRI machines and other GE assets, the data that will inform those systems still needs to be generated. In other words, GE is performing the same kinds of tests that you can read about elsewhere. The big difference, of course, is that it’s GE, and GE has a very deep bench.

100 Percent Yield

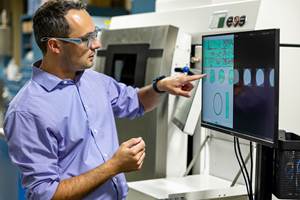

Brent Brunell, the Edge Mission Leader at Global Research, leads the team that works across GE’s industrial businesses to secure and connect the company’s assets from the Edge to the Predix Cloud. Connecting additive machines—preparing them to access and interact with digital libraries of additive information and AI-driven decision making—is also a key focus of this mission. During my visit, members of GE’s Additive team at the GRC demonstrated their side of the equation—stocking the library itself.

As the additive materials mission leader at GRC, Joseph Vinciquerra helps lead the Additive Research Lab team responsible for populating a digital library of additive build parameters that will reside in the Predix Cloud. These parameters, along with those collected from Concept Laser machines at the facility, will be analyzed through machine learning to inform additive machine operators during their build operations. The goal, Vinciquerra says, is to achieve “100 percent yield,” a state of additive perfection in which every build is without flaw.

To accomplish this, Vinciquerra and his team members, who during my visit included Laura Dial, senior engineer, and Scott Oppenheimer, process engineer, print simple geometric shapes such as metal test bars and rods. High-resolution cameras record each layer of the build, catching flaws and imperfections that would be imperceptible to the human eye. CT scans then record any flaws within the finished part, and all of that data is uploaded to the Predix system, which is tasked with using machine-learning algorithms to correlate those flaws to conditions on the powder bed that existed at the moment the flawed layer was produced. These kinds of tests are run time and time again as a means of training the system. But the ultimate goal is larger, and more complex.
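The article does not say which algorithms Predix applies, so the following Python sketch substitutes a much simpler statistic to show the idea of correlating per-layer image observations with CT findings. It assumes each layer has already been reduced to two yes/no values (was an anomaly flagged in the layer image, and did the CT scan find a defect at that layer) and computes the phi coefficient, which is the Pearson correlation for two binary variables. The function names and data layout are hypothetical.

```python
from math import sqrt


def contingency(layer_flags, ct_defects):
    """Count co-occurrences of an image-flagged layer and a CT-detected defect.

    Both inputs are equal-length lists of booleans indexed by layer number.
    Returns (both, flag_only, defect_only, neither).
    """
    if len(layer_flags) != len(ct_defects):
        raise ValueError("inputs must cover the same layers")
    both = flag_only = defect_only = neither = 0
    for flagged, defective in zip(layer_flags, ct_defects):
        if flagged and defective:
            both += 1
        elif flagged:
            flag_only += 1
        elif defective:
            defect_only += 1
        else:
            neither += 1
    return both, flag_only, defect_only, neither


def phi_coefficient(layer_flags, ct_defects):
    """Phi coefficient: +1 is perfect agreement, 0 is no association."""
    a, b, c, d = contingency(layer_flags, ct_defects)
    denom = sqrt((a + b) * (c + d) * (a + c) * (b + d))
    # If either variable never changes, the correlation is undefined; report 0.
    return 0.0 if denom == 0 else (a * d - b * c) / denom
```

A value near zero for a given surface feature would suggest that the feature, however visible, has little bearing on internal defects. A trained model would go further than this single number, but the underlying pairing of layer images with post-build inspection is the same.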

Vinciquerra and his team, along with scientists and engineers from GE’s laser group, the computational fluid dynamics group, the materials group and others, are setting out to create a closed-loop system with ingrained defect-spotting capabilities that corrects flaws and potential flaws in real time. If the powder layer is slightly ridged, and if that ridged condition is known to result in unfused powder, for example, the printer will pause to adjust the layer before continuing. “The idea is that the machine has a compensation strategy based on what the computer vision sees,” Vinciquerra says. “That’s the long-term goal here.” In other words, GE’s ultimate goal is to operationalize the “see, think, do” closed-loop system for additive manufacturing.

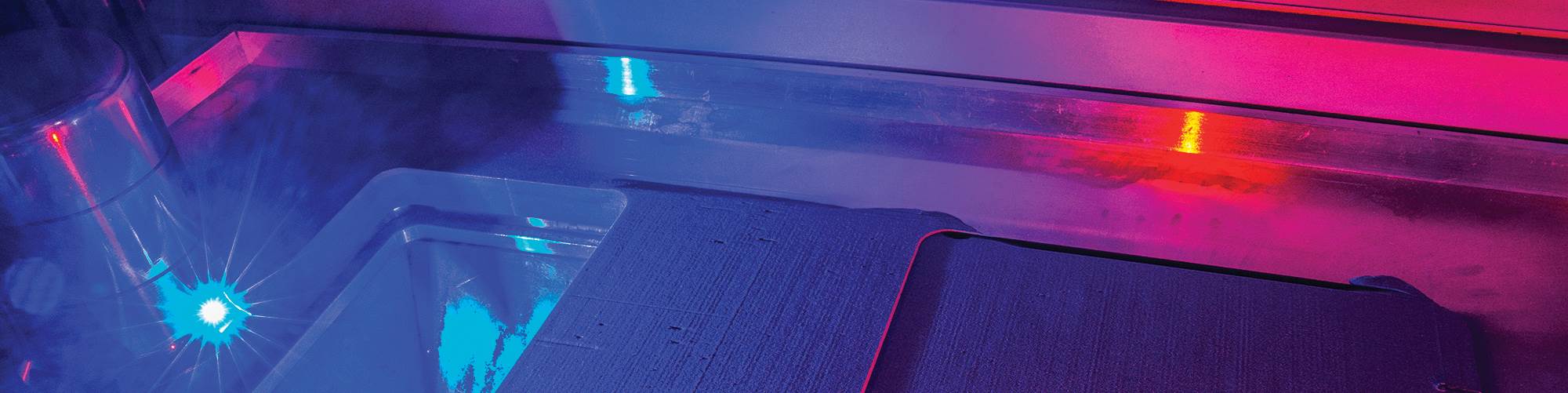

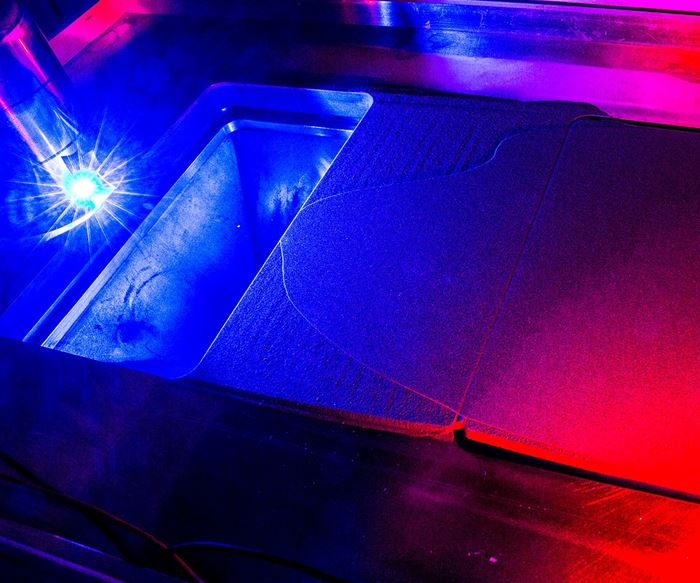

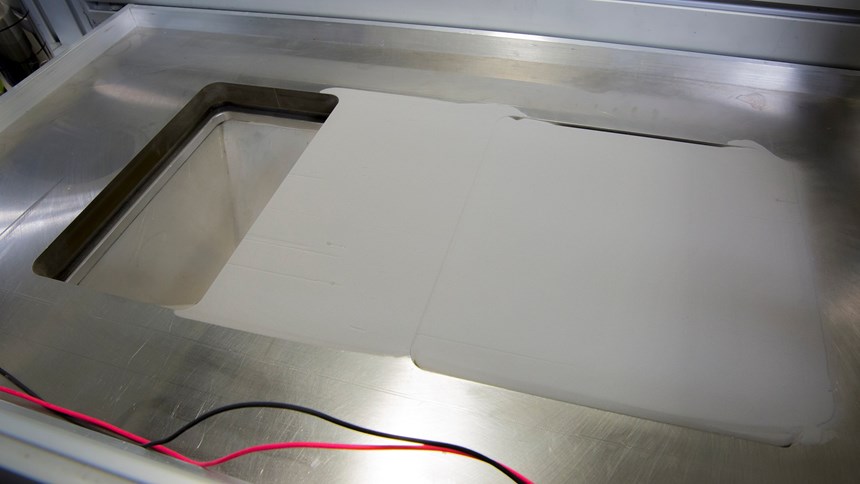

To illustrate the tests being conducted to populate this additive parameter library, Oppenheimer shows me a test rig that has been set up to replicate the Concept Laser powder-bed system. This unit lacks a laser and powder feed system within the chamber, and contains only the build plate, the recoater blade and the powder bed. A camera at the top of the chamber points down at the bed, and LED lights are positioned at a low, oblique angle to it. Oppenheimer manually pours a measured amount of powder on the bed, and programs the recoater blade to spread the powder at a high speed. With all of the lab lights turned on, the layer looks even and smooth. But when the lights are turned off and Oppenheimer switches on the blue LED lights that are angled low on the powder bed, ripples along the powder suddenly reveal themselves. This is a direct result of running the recoater blade too quickly.
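One way to picture how software might score such an image: under oblique light, ripples show up as alternating bright and dark bands, so brightness changes sharply from one pixel to the next along the recoat direction. The Python sketch below measures that variation on a single row of pixels. It is an assumption-laden toy, not the lab’s method: the brightness profile is synthetic, and `ripple_score`, `synthetic_profile` and the mid-gray level of 128 are invented for the example.

```python
from math import sin, pi
from statistics import pstdev


def ripple_score(profile):
    """Standard deviation of brightness differences between neighboring pixels."""
    diffs = [b - a for a, b in zip(profile, profile[1:])]
    return pstdev(diffs) if diffs else 0.0


def synthetic_profile(n, ripple_amplitude, period=8):
    # Brightness along the recoat direction; 128 is an arbitrary mid-gray level.
    return [128 + ripple_amplitude * sin(2 * pi * i / period) for i in range(n)]


# A flat layer versus one with periodic ripples, as seen under oblique light.
smooth = synthetic_profile(64, ripple_amplitude=0.0)
rippled = synthetic_profile(64, ripple_amplitude=20.0)

print(ripple_score(smooth) < ripple_score(rippled))
```

The flat profile scores zero and the rippled one scores well above it, so a threshold on the score could separate the two. Real powder-bed images are noisy and two-dimensional, so a production system would need something more robust than this.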

“When we do this exercise on an actual machine,” Vinciquerra says, “we’re capturing images every time we recoat during a build. Those images get correlated to post-build inspection. And we’re using machine learning to correlate observations in a powder-bed recoating to abnormalities in the final product. Abnormalities like porosity, cracks, lack of fusion—the types of things that debit the material properties that we’re after.”

Laura Dial is quick to point out that not every condition like this will result in a flaw, and that it’s important to conduct these tests across a range of settings in order to make those determinations. “If you look just in the ambient light, not much pops out to the eye,” Dial says. “But if you use the oblique angle lighting, you can very clearly see very, very small things in your build. These small things are not necessarily something that causes a problem, but to be able to track them and correlate them to what you see in a microstructure allows you to modify your process with a lot of confidence. To be more production ready. In other words, you can see a lot, and a lot of the things we see actually don’t matter.”

Vinciquerra notes that the first order of his team’s work is to train a machine-learning-based model that will allow the real-time imaging to predict the material properties at the end of a build. If the camera captures an image of streaking, pitting, or any condition among the library of features that indicates a potential problem, a signal can be sent to the machine operator to stop the build and make a correction. But down the road, GE aims to incorporate the informatics that are being relayed from the image analysis into the control system of the machine. Using a machine-learning-based model, if the machine detects a flaw on a single recoat, it can make a fine adjustment on the next layer to fix it. Combined with the research being conducted across laser, materials, computational fluid dynamics and other GE groups that are conducting similar tests, the work represents just a small portion of the resources being placed into machine learning here in Niskayuna.
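The two stages described above, alerting the operator now and adjusting the next layer later, can be expressed as a small decision function. This Python sketch is a hypothetical illustration only: the feature names are taken from the examples in this article, and the `Action` values and `decide` function are assumptions, not part of any GE or Concept Laser interface.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    ALERT_OPERATOR = "alert_operator"
    ADJUST_NEXT_LAYER = "adjust_next_layer"


# Hypothetical feature library; a real one would be learned from build data.
KNOWN_PROBLEM_FEATURES = {"streaking", "pitting", "ripples"}


def decide(detected_features, closed_loop_enabled):
    """Map the features detected on one recoat to a response."""
    problems = KNOWN_PROBLEM_FEATURES & set(detected_features)
    if not problems:
        # Visible features that are not known to cause flaws are ignored.
        return Action.CONTINUE
    if closed_loop_enabled:
        # Longer-term goal: the machine compensates on the next layer.
        return Action.ADJUST_NEXT_LAYER
    # First stage: signal the operator to stop the build and correct it.
    return Action.ALERT_OPERATOR
```

Keeping the library of problem features separate from the response logic reflects Dial’s point that many visible features turn out not to matter: the library can shrink or grow as testing shows which conditions actually lead to flaws.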

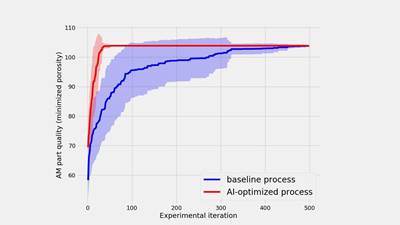

“At GE,” Vinciquerra says, “we spend a tremendous amount of time asking, how do we master a broad set of material systems for additive? How do we understand them enough so we can build high quality parts using the processes we have in powder-bed AM? Machine learning, AI-driven decision making with material science is a channel that we’re forging specifically because of that challenge. There are so many different things to understand in additive today. What are the shortcuts that we can take to get there? We need to leverage these shortcuts, which in no way implies less rigor. It’s just as rigorous as our traditional material science. It’s just that we’ve got to do it faster.”

Related Content

Inspection Method to Increase Confidence in Laser Powder Bed Fusion

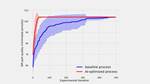

Researchers developed a machine learning framework for identifying flaws in 3D printed products using sensor data gathered simultaneously with production, saving time and money while maintaining comparable accuracy to traditional post-inspection. The approach, developed in partnership with aerospace and defense company RTX, utilizes a machine learning algorithm trained on CT scans to identify flaws in printed products.

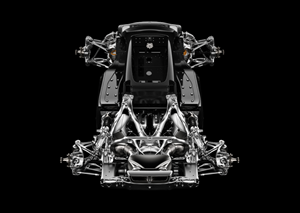

Read MoreDivergent Technologies Eyes High-Volume, Optimized Automotive Production Through Additive

While some automotive OEMs are using additive here and there, Divergent Technologies is basing its vehicles on 3D printed structures.

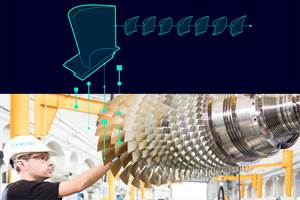

Read MoreSiemens’ Engineering HEEDS AI Simulation Predictor Optimizes Additive Manufacturing

The HEEDS AI Simulation Predictor empowers organizations to take full advantage of the digital twin to optimize products through advanced state-of-the-art artificial intelligence with built-in accuracy awareness.

Read MoreActivArmor Casts and Splints Are Shifting to Point-of-Care 3D Printing

ActivArmor offers individualized, 3D printed casts and splints for various diagnoses. The company is in the process of shifting to point-of-care printing and aims to promote positive healing outcomes and improved hygienics with customized support devices.

Read MoreRead Next

With Machine Learning, We Will Skip Ahead 100 Years

Machine learning or AI will prove vital to the advance of AM. Computational power will enable additive to advance much faster than if it had been invented in an earlier time.

Read More3D Printing Brings Sustainability, Accessibility to Glass Manufacturing

Australian startup Maple Glass Printing has developed a process for extruding glass into artwork, lab implements and architectural elements. Along the way, the company has also found more efficient ways of recycling this material.

Read MoreAt General Atomics, Do Unmanned Aerial Systems Reveal the Future of Aircraft Manufacturing?

The maker of the Predator and SkyGuardian remote aircraft can implement additive manufacturing more rapidly and widely than the makers of other types of planes. The role of 3D printing in current and future UAS components hints at how far AM can go to save cost and time in aircraft production and design.

Read More